The Enterprise AI Brief | Issue 8

May 4, 2026


Use GenAI with confidence without compromising security, traceability, or auditability

Policy enforced before the model sees anything

PromptVault intercepts every prompt and enforces policy in real time, detecting and tokenizing sensitive data so raw information never leaves your enterprise. This proactive control layer keeps raw sensitive data away from external GenAI platforms while still allowing copilots to reason safely over confidential financial and enterprise data.
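As a rough illustration of detect-and-tokenize, here is a minimal Python sketch. The regex detectors, the `<KIND_xxxxxxxx>` token format, and the in-memory `TokenVault` class are all assumptions made for this example, not PromptVault's actual detectors or vault; a production vault would be encrypted and persistent.

```python
import re
import secrets

# Hypothetical detectors; real deployments would use much richer detection.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


class TokenVault:
    """In-memory stand-in for a vault that maps tokens back to original values."""

    def __init__(self):
        self._value_by_token = {}
        self._token_by_value = {}

    def tokenize(self, kind, value):
        # Reuse one token per distinct value so repeated mentions stay linked.
        key = (kind, value)
        if key not in self._token_by_value:
            token = f"<{kind}_{secrets.token_hex(4)}>"
            self._token_by_value[key] = token
            self._value_by_token[token] = value
        return self._token_by_value[key]

    def lookup(self, token):
        return self._value_by_token[token]


def tokenize_prompt(prompt, vault):
    """Replace every detected sensitive value with a vault token."""
    for kind, pattern in PATTERNS.items():
        prompt = pattern.sub(lambda m, kind=kind: vault.tokenize(kind, m.group(0)), prompt)
    return prompt
```

Reusing one token per distinct value is a design choice: the model can still tell that two mentions refer to the same thing without ever seeing the value itself.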

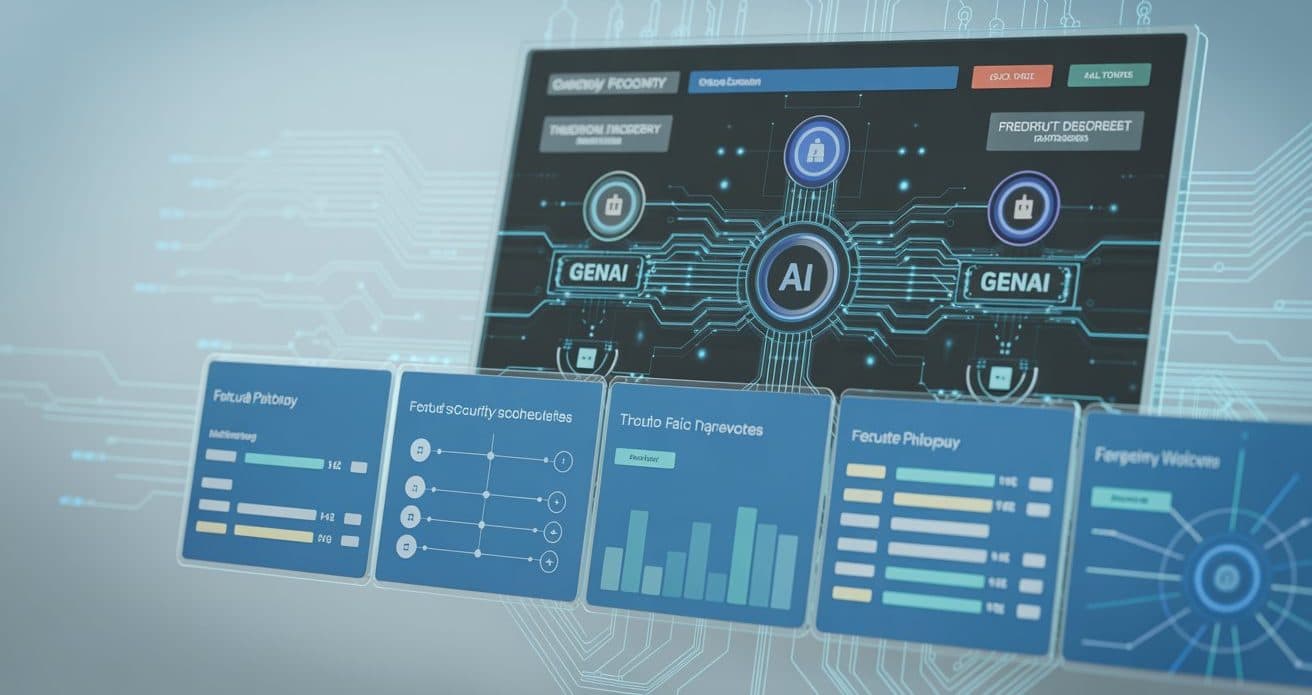

Every AI interaction, fully accounted for

Gain complete traceability across GenAI usage with detailed logging of every prompt, response, data decision, and policy action. See exactly who accessed what, when, and why—eliminating blind spots from shadow AI or unauthorized interactions.
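One way to capture who, what, when, and why is a structured record per event, serialized as a single JSON line. The `AuditEvent` fields below are hypothetical, chosen to mirror those four questions; they do not describe PromptVault's log schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditEvent:
    user: str       # who: the person or service account
    action: str     # what happened, e.g. "prompt", "response", "detokenize"
    resource: str   # what was touched, e.g. a token or a model endpoint
    reason: str     # why: the policy rule that produced the decision
    decision: str   # e.g. "allow", "mask", "deny"
    # when: UTC timestamp captured at creation time
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(event: AuditEvent) -> str:
    """Serialize one event as a single JSON line for append-only log storage."""
    return json.dumps(asdict(event), sort_keys=True)
```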

Compliance becomes provable, not assumed

Immutable audit trails capture every GenAI interaction end-to-end, providing verifiable proof for regulators, auditors, and internal reviews. PromptVault's analytics surface governance adherence, operational performance, and risk trends—turning compliance into defensible evidence, not interpretation.
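Immutability is usually enforced by the storage layer, but a hash chain is one common way to make an audit trail tamper-evident and checkable by an outside reviewer. This is a generic technique sketched under that assumption, not a description of how PromptVault builds its trails; `append_entry` and `verify_chain` are illustrative names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _link(prev_hash, record):
    """Hash the record's canonical JSON together with the previous entry's hash."""
    body = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain, record):
    """Append a record whose hash covers both its content and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev_hash, "hash": _link(prev_hash, record)})


def verify_chain(chain):
    """Recompute every link; an edited, reordered, or deleted middle entry fails."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev"] != prev_hash or entry["hash"] != _link(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain on its own cannot reveal entries cut off the end of the log, so the latest hash would also need to be recorded somewhere the log writer cannot change.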

The governance layer that says yes to AI

PromptVault applies granular, context-aware policies so only authorized users see raw sensitive data while others work safely with tokens that stand in for the original values. This governance layer works across multiple GenAI platforms, enabling teams to move faster without sacrificing security, compliance, or jurisdictional alignment.
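A granular, context-aware policy can be reduced, for illustration, to a default-deny check over who is asking and from where. The roles, data categories, and jurisdiction rule below are invented for this sketch and are not PromptVault's policy model.

```python
from dataclasses import dataclass

# Hypothetical policy: roles allowed to see raw values, per data category.
POLICY = {
    "SSN": {"hr-admin"},
    "EMAIL": {"hr-admin", "support"},
}

# Hypothetical jurisdiction rule: raw SSNs are only revealed for US requests.
ALLOWED_JURISDICTIONS = {"SSN": {"US"}}


@dataclass(frozen=True)
class Context:
    role: str
    jurisdiction: str  # where the request originates


def may_reveal(category: str, ctx: Context) -> bool:
    """Default deny: unknown categories and unlisted roles only ever see tokens."""
    if ctx.role not in POLICY.get(category, set()):
        return False
    allowed = ALLOWED_JURISDICTIONS.get(category)
    return allowed is None or ctx.jurisdiction in allowed
```

Defaulting to deny means a newly detected data category stays tokenized until someone writes a rule for it.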

1. A user submits a prompt containing sensitive data.
2. PromptVault detects sensitive values and replaces them with tokens.
3. The tokenized prompt is securely sent to the LLM.
4. When the LLM responds, PromptVault applies role-based rules to determine what information is revealed in the response.
5. Dashboards and reports surface every action and decision.
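The five steps can be sketched end to end with a stub in place of the real model. Everything here is hypothetical: `fake_llm` simply echoes its input, only email addresses are detected, and `REVEAL_ROLES` stands in for a real policy engine.

```python
import re
import secrets

EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
TOKEN = re.compile(r"<EMAIL_[0-9a-f]{8}>")
REVEAL_ROLES = {"hr-admin"}  # hypothetical: roles allowed to see raw values


def fake_llm(prompt: str) -> str:
    """Stand-in for any hosted or internal model; it echoes what it was sent."""
    return f"Summary of request: {prompt}"


def handle(prompt: str, role: str, vault: dict, audit: list) -> str:
    # Steps 1-2: detect sensitive values and swap each one for a fresh token.
    def to_token(match):
        token = f"<EMAIL_{secrets.token_hex(4)}>"
        vault[token] = match.group(0)
        return token

    safe_prompt, count = EMAIL.subn(to_token, prompt)
    audit.append({"step": "tokenize", "role": role, "tokens": count})

    # Step 3: only the tokenized prompt is sent to the model.
    response = fake_llm(safe_prompt)

    # Step 4: role-based rules decide whether tokens are swapped back.
    revealed = role in REVEAL_ROLES
    if revealed:
        response = TOKEN.sub(lambda m: vault.get(m.group(0), m.group(0)), response)

    # Step 5: record the decision so dashboards and reports can surface it.
    audit.append({"step": "response", "role": role,
                  "decision": "revealed" if revealed else "masked"})
    return response
```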

PromptVault prevents sensitive data from being exposed to AI systems by detecting, tokenizing, and securely vaulting it before it reaches an LLM.

Filtering at the model provider is not enough on its own, because sensitive data is still transmitted to the provider before those filters are applied. PromptVault ensures sensitive data never leaves the enterprise boundary in raw form.

PromptVault does not block requests that contain sensitive data. It keeps prompts usable by replacing sensitive values with tokens instead.

Only users or systems explicitly authorized by policy can detokenize and view original values.

PromptVault is model-agnostic and can be used with hosted or internal LLMs.

Sensitive data is stored only in the encrypted vault and retained according to configurable retention policies.
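A configurable retention policy can be pictured as a periodic purge of vault entries older than their category's limit. The `RETENTION` table and the vault layout below are assumptions for this sketch, and encryption at rest is left out to keep it short.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention settings, configurable per data category.
RETENTION = {"SSN": timedelta(days=30), "EMAIL": timedelta(days=365)}


def purge_expired(vault, now):
    """Drop vault entries older than their category's retention period.

    vault maps token -> (category, original_value, stored_at).
    Returns the removed tokens; in this sketch they can no longer be detokenized.
    """
    expired = [
        token for token, (category, _value, stored_at) in vault.items()
        if now - stored_at > RETENTION[category]
    ]
    for token in expired:
        del vault[token]
    return expired
```

Once an entry is purged here, any copy of its token left in stored prompts or responses can no longer be reversed.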

By enforcing access controls, maintaining audit logs, and preventing unauthorized data exposure, PromptVault supports compliance with regulations such as HIPAA, GDPR, PCI DSS, and SOC 2.