The LLM Security Gap

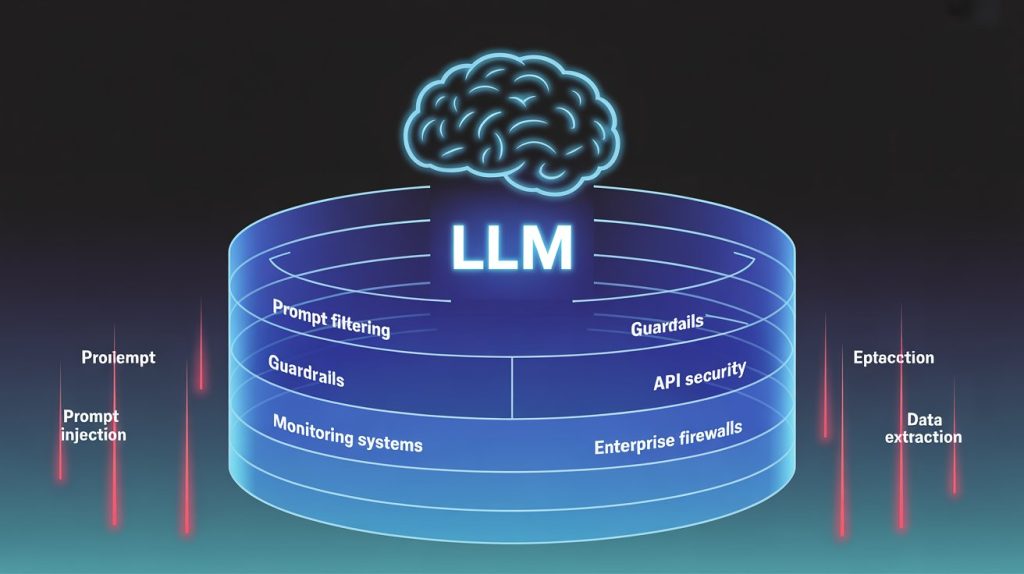

Why Blocking Isn’t Protection, and What Enterprises Actually Need

Executive Summary

Large Language Models are transforming enterprise productivity. They’re also creating a data security problem that existing tools weren’t designed to solve.

The instinct is to block. Restrict LLM access. Sanitize everything. But blocking doesn’t protect; it just pushes the problem underground. Employees route around restrictions. Shadow AI proliferates. The data leaks anyway, just without visibility or audit trails.

Meanwhile, the tools marketed as “LLM security” fall into two failure modes: they either break workflows (making LLMs useless for real work) or fail silently (letting sensitive data through while appearing to work).

This whitepaper explains:

The bottom line: Enterprises need to let authorized people work with sensitive data in LLM workflows, while preventing unauthorized exposure and maintaining audit trails. That’s a different problem than “block all sensitive data from LLMs,” and it requires a different solution.

The Problem in Plain Language

Every day, employees use LLMs with sensitive data: fraud analysts investigating customers, compliance officers reviewing filings, support agents drafting responses, lawyers analyzing contracts.

This isn’t misuse. This is the use case.

LLMs are valuable because they work with real business contexts. An AI that can’t see the customer’s complaint can’t help draft a response. An AI that can’t see transaction history can’t identify fraud patterns.

The question isn’t whether sensitive data will enter LLM workflows. It will. The question is: what controls exist when it does?

What Buyers Get Wrong Today

Wrong Assumption #1: “We’ll just block LLMs.”

Some enterprises restrict LLM access entirely. No ChatGPT. No Copilot. No AI tools.

Why this fails: Blocking doesn’t eliminate risk. It eliminates visibility into risk.

Wrong Assumption #2: “Sanitization solves it.”

Other enterprises deploy sanitization tools: scan prompts, then mask or redact sensitive data before it reaches the LLM.

Why this fails: Sanitization protects the LLM from your data. It doesn’t protect your data while letting people use it.

Wrong Assumption #3: “Anonymization is enough.”

Some enterprises anonymize data before LLM processing: replace real names with fake ones, remove identifiers.

Why this fails: Anonymization is the right tool for the wrong job.

Wrong Assumption #4: “Our existing DLP handles it.”

Traditional DLP tools monitor network traffic, email, and file transfers. Some assume these cover LLM workflows.

Why this fails: DLP protects perimeters. LLM security requires protecting data within workflows.

Why Current Tools Fail Silently

The most dangerous failure isn’t the one that breaks your workflow. It’s the one that appears to work. Silent failure means sensitive data escapes protection, and no one knows.

How this happens:

An auditor asks: “Prove no customer SSNs were exposed to the LLM last quarter.”

With sanitization tools, the honest answer is: “We can prove what we detected. We cannot prove what we missed.”

That’s not compliance. That’s hope.

The Regulatory Pressure

Regulators are paying attention. LLMs create new data exposure vectors that existing frameworks didn’t anticipate, but existing obligations still apply.

GDPR / Privacy: Data minimization, purpose limitation, right to access/erasure. Internal LLM workflows often require identifiable data, triggering all usual obligations.

HIPAA: Minimum necessary, audit controls, business associate agreements. Sanitization blocks PHI, but it also blocks clinicians from using that PHI for legitimate care purposes.

Financial (SOX, PCI-DSS, GLBA): Access controls, audit trails, data retention. Can you demonstrate segregation of duties when AI is involved?

Blocking LLMs doesn’t eliminate regulatory risk. It means employees use uncontrolled channels instead.
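
To make the silent-failure problem described above concrete, here is a minimal sketch of a pattern-based sanitizer. It is hypothetical: the `SSN_PATTERN` regex and the `sanitize` function are assumptions for illustration, not any vendor's actual detector. It redacts an SSN written with hyphens but passes the same number written with spaces, and its detection count records only what it caught.

```python
import re

# Hypothetical detector: matches SSNs only in the hyphenated form NNN-NN-NNNN.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def sanitize(prompt: str) -> tuple[str, int]:
    """Redact matches and return (sanitized prompt, number of detections)."""
    return SSN_PATTERN.subn("[REDACTED]", prompt)


# Same (fictitious) SSN, two formats: one is caught, one slips through.
caught, n_caught = sanitize("Customer SSN is 123-45-6789.")
missed, n_missed = sanitize("Customer SSN is 123 45 6789.")
```

The second call reports zero detections, which in a log looks identical to a prompt that never contained an SSN. That is the gap between proving what was detected and proving what was missed.
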

The Enterprise Risk

Beyond compliance, LLM security gaps create direct business risk:

The Operational Friction

Security controls that break workflows aren’t just inconvenient. They’re counterproductive.

When security tools block legitimate work:

The goal isn’t to prevent all access to sensitive data. It’s to enable authorized access with appropriate controls.

What Enterprises Actually Need

Enterprises successfully deploying LLMs have figured out something: the problem isn’t preventing access, it’s governing access.

Governed access means:

This is the model that actually works: protection without disruption for authorized work.

What this looks like in practice:

A fraud analyst and a marketing intern both submit prompts containing a customer’s SSN. Same data. Same LLM. Different outcomes.

The fraud analyst’s role is authorized for SSN access. The system recognizes this, allows detokenization, and the analyst sees the real SSN in the response. Workflow continues. Investigation proceeds.

The marketing intern’s role is not authorized. The system recognizes this, denies detokenization, and the intern sees a meaningless token instead of the SSN. They can’t access data they shouldn’t have. But the analyst sitting next to them can.

Same prompt. Same data. Same system. Different access based on role. Both workflows continue appropriately.

That’s governed access.

Why the Market Isn’t Solving This Yet

The GenAI security market is young. Most solutions were adapted from adjacent problems rather than built for this one.

Gap 1: No Multi-Modal Governed Access

Solutions exist for text, but enterprise data lives in images, PDFs, and audio. A tool that protects text but ignores screenshots isn’t comprehensive.

Gap 2: Agentic AI Is Uncharted Territory

LLMs are evolving from chat interfaces to autonomous agents that take actions, call APIs, and chain decisions. Security models designed for single prompts don’t address multi-step workflows.
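
For reference, the governed-access scenario described earlier, where a fraud analyst and a marketing intern get different results for the same SSN, can be sketched as follows. This is a minimal illustration under stated assumptions, not a reference design: `TokenVault`, `POLICY`, the role names, the user names, and the token format are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical policy: which roles may detokenize which data types.
POLICY = {"fraud_analyst": {"ssn"}, "marketing_intern": set()}


@dataclass
class TokenVault:
    """Maps opaque tokens to original values and records every access decision."""

    _values: dict = field(default_factory=dict)
    audit_log: list = field(default_factory=list)

    def tokenize(self, value: str, data_type: str) -> str:
        # Replace the sensitive value with a token before it reaches the LLM.
        token = f"<{data_type.upper()}_{len(self._values) + 1}>"
        self._values[token] = (value, data_type)
        return token

    def detokenize(self, token: str, user: str, role: str) -> str:
        # Decide by role, log the decision either way, then reveal or withhold.
        value, data_type = self._values[token]
        allowed = data_type in POLICY.get(role, set())
        self.audit_log.append(
            {"user": user, "role": role, "token": token, "allowed": allowed}
        )
        return value if allowed else token


vault = TokenVault()
token = vault.tokenize("123-45-6789", "ssn")  # fictitious SSN
analyst_view = vault.detokenize(token, user="alice", role="fraud_analyst")
intern_view = vault.detokenize(token, user="bob", role="marketing_intern")
```

In this sketch the analyst gets the original value back and the intern gets only the token, while `vault.audit_log` holds one entry per decision, including the denial. A real deployment would also need detection, secure storage, and identity integration, none of which are modeled here.
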

Agents break the assumptions current tools rely on:

Prompt-level controls evaluate a single input at a single moment. Agentic workflows require access decisions that persist, adapt, and audit across an entire task.

Gap 3: Detection Accuracy Is Unverified

Vendors claim high detection rates. Few publish benchmarks. Buyers are taking accuracy on faith.

Gap 4: No Standard Audit Format

Every solution logs differently. No industry-standard format for LLM security audit trails exists.

Gap 5: Role-Based Access Is Rare

Most tools are binary: block or allow. Few support “allow for this role, with this purpose, for this time window.”

Gap 6: Prompt-Only Security Is Insufficient

Many “AI firewall” solutions focus on scanning prompts for malicious input: jailbreaks, injection attacks. This matters, but it’s the wrong problem.

The primary risk isn’t malicious users crafting adversarial prompts. It’s legitimate users doing legitimate work with sensitive data. Prompt scanning can’t distinguish authorized access from unauthorized access. It treats all sensitive data as a threat.

The problem isn’t malicious input; it’s governing legitimate access.

The Decision Framework

When evaluating LLM security, ask these questions. If you don’t like the answers, you’re looking at a tool that will fail in production.

1. Does it preserve workflow for authorized users?

If the tool breaks legitimate work, users will work around it. Security that gets bypassed isn’t security; it’s theater.

Red flag: “All sensitive data is blocked/sanitized regardless of user role.”

2. Does it support role-based access?

Different users have different authorization levels. A tool that treats a fraud analyst and a marketing intern the same way doesn’t fit enterprise governance.

Red flag: “Access decisions are based on data type, not user authorization.”

3. Does it produce audit evidence