NIST Launches Initiative to Define Identity and Security Standards for AI Agents

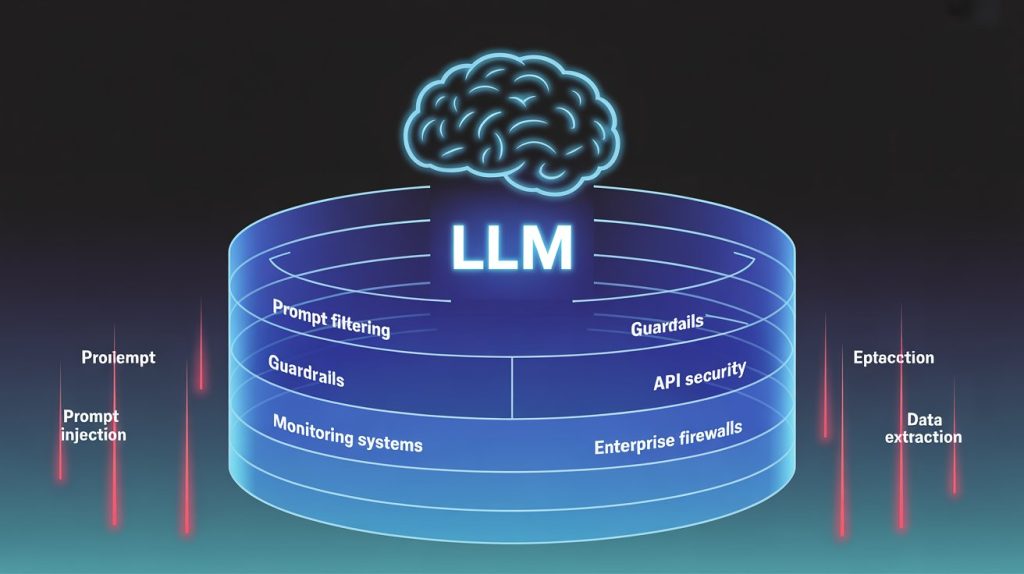

Here is a scenario playing out in enterprises right now. An AI agent is deployed to automate routine work: scheduling meetings, querying databases, updating tickets, and calling external APIs. It operates across internal systems, makes decisions about which tools to use, and executes actions on behalf of users. Then an auditor asks a simple question: who authorized the agent to access those systems, and how can its actions be traced?

For most organizations today, there is no consistent answer to that question. NIST wants to change that.

The Problem With How We Think About Software Identity

Traditional software identity models were built around clearly defined applications and services. You have a service account, a set of permissions, and an audit log. The boundaries are known.

AI agents break that model. These systems don’t just execute predefined tasks. They operate with a degree of autonomy, deciding which tools to call, which APIs to query, and which actions to take in sequence. An agent handling a user request might touch five internal systems and two external APIs in a single session, none of which were explicitly anticipated when the agent was deployed.

That creates a governance gap that existing identity and access management frameworks weren’t designed to address. Who, or what, is the agent? What is it authorized to do? If something goes wrong midway through a chain of actions, where does accountability land? These are operational questions that enterprises deploying agent systems are already encountering.

What NIST Announced

In February 2026, NIST’s Center for AI Standards and Innovation launched the AI Agent Standards Initiative, a coordinated program to develop security, identity, and interoperability standards for autonomous AI agents.

The initiative is organized around three areas of work. The first is facilitating industry-led standards development and maintaining U.S.
participation in international standards bodies working on AI agent specifications. The second is fostering open source protocol ecosystems for agent interoperability, supported in part through research programs like the National Science Foundation’s work on secure open source infrastructure. The third focuses on foundational research in agent security and identity: authentication models, authorization frameworks, and evaluation methods for autonomous systems.

Two early deliverables are already in progress. NIST issued a Request for Information on AI Agent Security, with responses due March 9, 2026, seeking ecosystem input on threats, mitigations, and evaluation metrics. Separately, the National Cybersecurity Center of Excellence published a concept paper titled Accelerating the Adoption of Software and AI Agent Identity and Authorization, open for public comment until April 2, 2026. Sector-focused listening sessions covering healthcare, finance, and education are planned for April.

The Four Technical Problems NIST Is Trying to Solve

Agent identity. The concept paper explores treating AI agents as distinct entities within enterprise identity systems, with unique identifiers and authentication mechanisms similar to how service accounts or non-human identities are managed today. This would give agents a traceable presence in the systems they interact with, rather than operating invisibly under a user’s credentials or a shared service account.

Authorization and access control. Scoping agent permissions is harder than it sounds. An agent authorized to read a database shouldn’t necessarily be authorized to write to it, even if the underlying task seems to require it. The concept paper examines how existing IAM standards could be extended to support more granular, dynamic permission models for agents operating across multiple systems.

Action traceability and logging.
If an agent takes a sequence of actions across several systems in a single session, organizations need logs that can reconstruct what happened, in what order, and under what authorization. This is foundational for security monitoring, incident response, and audit. Without it, agentic systems are effectively a black box from a governance perspective.

Interoperability and protocol design. Agents built on different platforms need consistent ways to communicate with tools and services. Without shared protocols, every enterprise deployment becomes a bespoke integration problem, and security practices fragment across vendors. NIST plans to engage industry and open source communities to identify barriers and support shared technical approaches.

Why This Matters for Enterprise Teams

The initiative is early-stage, but the direction is clear: AI agent architecture is going to be subject to standardization pressure, and the technical decisions being made now will influence what those standards look like. A few practical implications worth tracking:

Non-human identity management is becoming a first-class problem. Enterprises are already managing service accounts, API keys, and OAuth tokens. Agents add a new layer of complexity because their behavior is less predictable than traditional software. IAM teams will likely need to think about agent identities the same way they think about privileged service accounts, with tighter scoping and more aggressive monitoring.

Audit requirements are coming. Whether driven by regulation or internal governance, detailed logs of agent actions are going to become a baseline expectation for any enterprise deploying autonomous systems in sensitive environments. Building that logging infrastructure now is easier than retrofitting it after an incident.

Fragmentation is the near-term risk. Until interoperability standards mature, enterprises integrating agents from multiple vendors are effectively building on unstable ground.
The NIST initiative signals that this will eventually be addressed, but the timeline for stable, broadly adopted standards is measured in years, not months.

The Honest Limitations

The initiative is a coordination and research effort, not a standards release. The technical models for agent identity and authorization are still being shaped by the RFI and comment process. How quickly interoperable protocols will emerge, and how broadly they will be adopted across vendors and platforms, is genuinely uncertain.

What NIST is doing is establishing the organizational and research infrastructure to make standardization possible. For a governance gap this foundational, that is probably the right starting point. But enterprises deploying agents today are doing so ahead of the standards, which means they must design their own governance and control approaches in the meantime.

Further Reading

NIST Center for AI Standards and Innovation: AI Agent Standards Initiative announcement

NIST NCCoE